Townsend Labs is now part of Universal Audio.

This is your new home for Sphere Modeling Microphones.

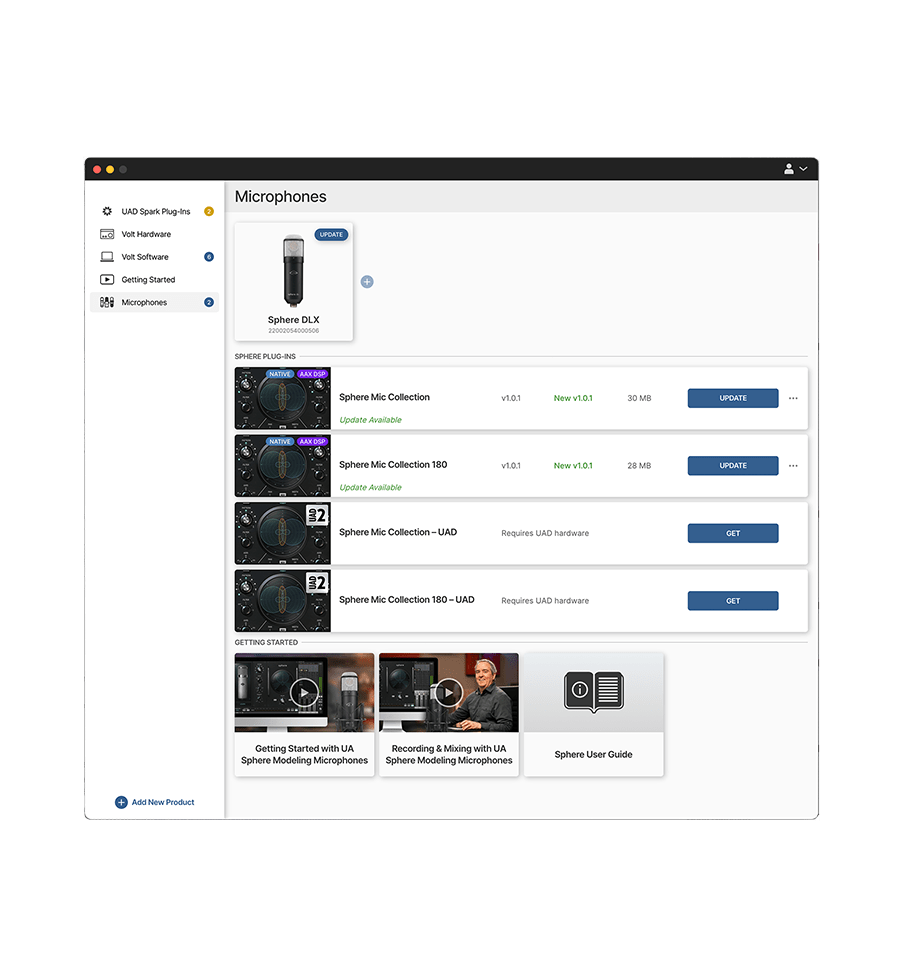

Register to Get Your Included Software

Download UA Connect to get your Sphere software, including the world's best emulations of legendary mics from Neumann, Telefunken, AKG, Sony, and more.*

Get the Sounds of the Greatest Mics Ever Made

The Sphere LX and DLX modeling microphones give you the tones of iconic ribbon, condenser, and dynamic microphones used by everyone from The Beatles and Beyoncé to Radiohead and Frank Sinatra.

Expand Your Mic Locker with Sphere UAD Plug‑Ins

Get jaw-dropping, hand-picked mics from Ocean Way Studios and legendary UA founder Bill Putnam Sr., and record with the same mics used on classic albums by Ray Charles, Elvis Presley, Stevie Wonder, and more.

World-Class Mics to Capture Your Best

Explore our family of professional microphones, designed to inspire singers, podcasters, engineers, and creatives of all types.

*All trademarks are property of their respective owners and used only to represent the microphones and sound treatment modeled as part of the UA Sphere Microphone Software.